Data Activation in Mobile UA

What is Data Activation?

Data activation, at its broadest, means acting on what data implies: generating value and insights, recognizing behaviors and trends in the data, and using them to improve performance, whatever the task may be.

In our field, data activation refers to using aggregated data for user acquisition, which in practice means being able to extrapolate from that data effectively. User acquisition is a priority for every app (and any business, for that matter), and as competition keeps growing, acquiring new users becomes more challenging.

As the competition for acquiring new users increases, so does the cost associated with it. It’s much more expensive to acquire new users than it is to retain existing ones. Overcoming the challenge of acquisition costs offers great rewards, such as scale, increased revenues, and eventual company growth.

Why is Data Activation Vital for Your User Acquisition?

These days, the amount of available data is practically unlimited. Running successful UA is rooted in the way you use yours.

When bidding on OpenRTB inventory, a single bid request can give you data on the type and model of the user’s device, OS version, location, local time, and much more. Using data enrichment and combining aggregated data makes your options virtually limitless. What differentiates you from the competition is the way you use, or more accurately, look at that data.
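To make this concrete, here is a minimal sketch of pulling a few of those signals out of a bid request. It assumes the request has already been parsed into a Python dictionary and uses OpenRTB 2.x field names (`device.make`, `device.model`, `device.os`, `device.osv`, `device.geo.country`, `device.geo.utcoffset`). The function name and the sample values are hypothetical, and real requests often omit some of these fields.

```python
from datetime import datetime, timedelta, timezone


def extract_signals(bid_request: dict, received_at_utc: datetime) -> dict:
    """Pull a few raw targeting signals out of an OpenRTB-style bid request."""
    device = bid_request.get("device", {})
    geo = device.get("geo", {})

    # geo.utcoffset is the user's local offset from UTC in minutes; it may be absent.
    offset_minutes = geo.get("utcoffset")
    local_time = None
    if offset_minutes is not None:
        local_time = received_at_utc + timedelta(minutes=offset_minutes)

    return {
        "device_make": device.get("make"),
        "device_model": device.get("model"),
        "os": device.get("os"),
        "os_version": device.get("osv"),
        "country": geo.get("country"),
        "local_hour": local_time.hour if local_time is not None else None,
    }


# Hypothetical, heavily trimmed bid request used only for illustration.
sample_request = {
    "id": "example-request",
    "device": {
        "make": "Apple",
        "model": "iPhone",
        "os": "iOS",
        "osv": "13.5",
        "geo": {"country": "USA", "utcoffset": -300},
    },
}

signals = extract_signals(
    sample_request, datetime(2020, 6, 1, 13, 30, tzinfo=timezone.utc)
)
print(signals)
```

Everything downstream (features, models, targeting) starts from raw signals like these, so missing fields are returned as `None` instead of being guessed.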

All marketing efforts, long before the world of digital and mobile apps, focused on understanding customers and their needs. Though the technology has changed, the ideas remain the same. Understanding trends, patterns, and users’ behaviors is key to running successful UA campaigns.

This behavior can be understood by focusing your analysis on an existing pool of users in order to predict future behaviors and recognize potential new users. In digital marketing, these potential new users are often referred to as lookalikes.
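One simple way to picture lookalikes is to describe each user as a vector of numbers and rank new users by how closely they resemble the average existing user. The sketch below uses cosine similarity to the centroid of a seed group. This is an illustrative stand-in, not a description of how any particular platform builds lookalikes, and the feature vectors and user names are made up.

```python
import math


def centroid(vectors):
    """Average the seed users' feature vectors, column by column."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical features: [morning activity, evening activity, sessions per day]
seed_users = [[0.9, 0.6, 5.0], [0.8, 0.7, 4.0], [0.7, 0.5, 6.0]]
candidates = {
    "user_a": [0.85, 0.6, 5.0],
    "user_b": [0.1, 0.9, 1.0],
}

seed_profile = centroid(seed_users)

# Rank candidates from most to least similar to the seed profile.
ranked = sorted(
    candidates.items(),
    key=lambda item: cosine_similarity(item[1], seed_profile),
    reverse=True,
)
for name, vector in ranked:
    print(name, round(cosine_similarity(vector, seed_profile), 3))
```

In practice the features would need scaling, and a trained model usually replaces the plain similarity score, but the idea of scoring new users against an existing pool is the same.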

It’s All About the Features

Depending on the technologies in use (this being 2020, we assume such technology exists and is already in use), you have a lot of data. You’ve recognized patterns and behaviors within the data, and now it’s time to act on them: generate predictions or make decisions that help acquire new users effectively.

The question is how to derive specific insights from these immense quantities of data and implement them. There’s a tendency to forget that there are users behind the data and to commit solely to the numbers at hand, recognizing trends and following them without rationalizing them, which creates problems in the long run.

When machine-learning algorithms are in use, the way to derive meaning from the mass of data is to create features that tell the algorithm how to use the data at hand. These features bridge the gap between the data and the users behind it. A feature can be as simple as the time of day or the day of the week, or as complex as the current session length or the contextual relationship between the user and the promoted application.

Time of Day and Day of the Week

To explain this point, we’ll use time-of-day data and show how you can create different features from the same data. You can create a time-of-day feature that treats this information on an hourly basis (i.e., there are 24 units in a day) and let the algorithm do its thing (target users by their most active hour units, for example). This would be an example of a feature that follows the data blindly, without accounting for actual user behavior.

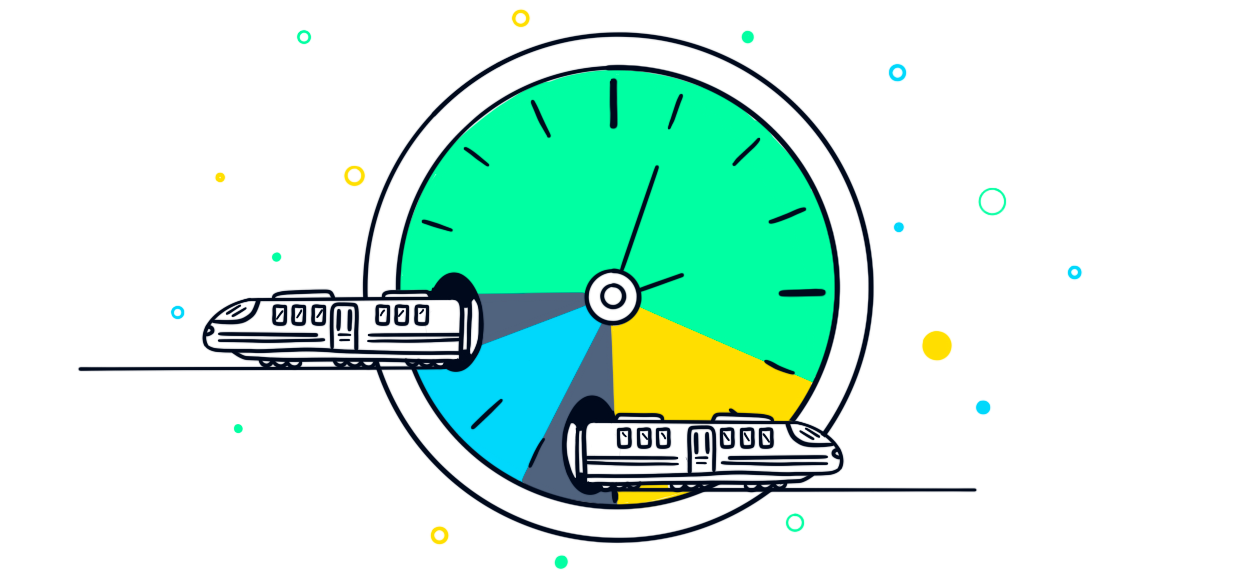
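As a minimal sketch, the hourly version of the feature could be encoded as 24 separate on/off units (often called one-hot encoding). The function name is ours, chosen for illustration.

```python
def hour_one_hot(hour: int) -> list:
    """Encode an hour of the day (0-23) as 24 units, exactly one of which is 1."""
    if not 0 <= hour <= 23:
        raise ValueError("hour must be between 0 and 23")
    return [1 if unit == hour else 0 for unit in range(24)]


encoded = hour_one_hot(8)
print(encoded)
```

Each hour is treated as unrelated to its neighbors, so the model has to learn on its own that 8:00 and 9:00 behave alike.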

On the other hand, you can look at the data and combine it with known human behaviors that correlate with it. For example, according to the data, users are mostly inactive during the night, most active in the morning (while commuting to work), somewhat active during the workday, and active again in the evening.

Instead of creating 24 single-hour units, you can create, for example, 4 units, each representing a different level of activity during the day, and use them when targeting. Feeding a model this kind of feature makes a lot more sense, in the long run, than blindly following the most basic grouping of data (i.e., the 24 single-hour units).
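A minimal sketch of the 4-unit version might look like the following. The bucket names and hour boundaries are assumptions made for illustration; in practice you would set them from your own activity data.

```python
# Hypothetical activity buckets; the boundaries are illustrative assumptions.
ACTIVITY_BUCKETS = {
    "night": range(0, 6),             # mostly inactive
    "morning_commute": range(6, 10),  # most active
    "workday": range(10, 18),         # somewhat active
    "evening": range(18, 24),         # active again
}


def activity_bucket(hour: int) -> str:
    """Map an hour of the day (0-23) to one of four behavior-based units."""
    for name, hours in ACTIVITY_BUCKETS.items():
        if hour in hours:
            return name
    raise ValueError("hour must be between 0 and 23")


print(activity_bucket(8))
print(activity_bucket(14))
```

The model now sees four units that each carry a behavioral meaning, instead of 24 units it has to relate to one another by itself.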

Features can become more intricate and interesting, for example by combining the time-of-day unit with movement (i.e., a location change within a certain time of day). The assumption is that if it’s rush hour and users are on the move, they’re probably commuting to work and are at their most active, at least for the morning portion of the day.

This means that the feature not only follows the data and targets accordingly but also incorporates human behavior, so it can make more nuanced decisions (i.e., targeting all users at rush hour is not as accurate as targeting users at rush hour who are also on the move). The better the features combine data and behavior, the easier it is to understand user behavior and actually predict future behavior.
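The combined feature can be sketched as follows, assuming you have two location readings for the same user taken a short time apart. The rush-hour window, the 1 km movement threshold, and the function names are all illustrative assumptions; distance is computed with the standard haversine formula.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two latitude/longitude points."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    delta_phi = math.radians(lat2 - lat1)
    delta_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(delta_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(delta_lambda / 2) ** 2
    )
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def is_commuting(hour, previous_location, current_location,
                 rush_hours=range(6, 10), min_distance_km=1.0):
    """True when it is rush hour AND the user has moved at least min_distance_km."""
    distance = haversine_km(*previous_location, *current_location)
    return hour in rush_hours and distance >= min_distance_km


# Hypothetical readings: two points a few kilometers apart.
home = (40.7128, -74.0060)
office = (40.7580, -73.9855)

print(is_commuting(8, home, office))   # rush hour and moving
print(is_commuting(8, home, home))     # rush hour, not moving
print(is_commuting(14, home, office))  # moving, but outside rush hour
```

Only the first call satisfies both conditions, which is the nuance described above: rush hour alone is a weaker signal than rush hour plus movement.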

Staying with the time-of-day example: if you build a time-of-day feature based on data collected on weekdays and then run it on weekends, the outcomes will be very different.

While midweek users were most active in the morning unit, when they were commuting to work, on the weekend they might be busy, asleep, with their family, or running errands during those same hours.

Had the feature included a location change (or a day-of-the-week grouping), the results would have been better and lookalikes would have played their part. Being too specific has its downsides, though: overly specific features can become limiting by causing the model to overfit.
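A day-of-the-week grouping can be sketched by pairing the time-of-day unit with a weekday/weekend flag, so that a weekend morning is never confused with a commute morning. The hour boundaries and the Saturday/Sunday weekend are assumptions here; weekends differ by region.

```python
from datetime import datetime


def hour_bucket(hour: int) -> str:
    """Coarse time-of-day unit; the boundaries are illustrative assumptions."""
    if hour < 6:
        return "night"
    if hour < 10:
        return "morning"
    if hour < 18:
        return "workday"
    return "evening"


def day_type(timestamp: datetime) -> str:
    """Group days into weekday/weekend (Saturday and Sunday assumed as weekend)."""
    return "weekend" if timestamp.weekday() >= 5 else "weekday"


def time_feature(timestamp: datetime) -> str:
    """Combine day type and time-of-day unit into a single feature value."""
    return f"{day_type(timestamp)}_{hour_bucket(timestamp.hour)}"


print(time_feature(datetime(2020, 6, 1, 8, 0)))  # a Monday morning
print(time_feature(datetime(2020, 6, 6, 8, 0)))  # a Saturday morning
```

Two day types times four time units gives eight values, which is still a coarse enough grouping to limit the overfitting risk.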

The Problem With Overfitting

Overfitting is what happens when the model learns the details and noise in the training data to the extent that it negatively affects the performance of the model on new data. Instead of correctly generalizing from the data and following trends, the model follows random fluctuations and noise and applies what it learned from them to new data.
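The effect can be shown with a deliberately contrived toy example. Below, a "model" that memorizes every one of the 24 hourly values fits the training day perfectly but does worse on a second day than a model that only learns four bucket averages. The activity rates and the alternating noise pattern are invented for illustration; real noise is random, not this tidy.

```python
# Hypothetical buckets and their "true" activity rates.
BUCKETS = [range(0, 6), range(6, 10), range(10, 18), range(18, 24)]
TRUE_RATE = [0.1, 0.8, 0.4, 0.7]


def bucket_of(hour):
    """Index of the bucket that contains the given hour (0-23)."""
    for index, hours in enumerate(BUCKETS):
        if hour in hours:
            return index
    raise ValueError("hour must be between 0 and 23")


def observed(noise_sign):
    """One day of observations: the true rate plus noise that alternates by hour."""
    return [
        TRUE_RATE[bucket_of(h)] + noise_sign * (0.1 if h % 2 == 0 else -0.1)
        for h in range(24)
    ]


def mse(predicted, actual):
    """Mean squared error between two equally long lists."""
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)


train = observed(+1)     # the day the models learn from
held_out = observed(-1)  # a new day where the noise falls differently

# Overfitted model: memorizes each hourly value, noise included.
hourly_pred = list(train)

# Simpler model: one average per bucket, which smooths the noise out.
bucket_means = [sum(train[h] for h in hours) / len(hours) for hours in BUCKETS]
bucket_pred = [bucket_means[bucket_of(h)] for h in range(24)]

hourly_train_error = mse(hourly_pred, train)
bucket_train_error = mse(bucket_pred, train)
hourly_test_error = mse(hourly_pred, held_out)
bucket_test_error = mse(bucket_pred, held_out)

print("train error:", hourly_train_error, bucket_train_error)
print("new-data error:", hourly_test_error, bucket_test_error)
```

The memorizing model wins on the training day and loses on the new one, which is exactly the pattern to watch for when comparing training and validation results.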

Building these features is challenging and requires expertise both in connecting the data points to the business problem at hand and in ensuring the model is representative and not overfitted. When done right, the rewards outweigh the effort by a significant margin.

Blackbox Approaches

The term Blackbox, in the context of machine-learning algorithms, refers to models whose inner workings cannot readily be interpreted by humans (such as neural networks).

At its core, a Blackbox model works similarly to ML models that can be interpreted (like logistic regression), but it is far harder to understand. Its iterative and sometimes recursive nature lets it learn complex feature relationships, so estimating the importance of each feature and its relation to the other features is essentially impossible.

The way to evaluate a Blackbox model is by its ability to predict: check whether it actually makes the predictions it was designed to make, effectively, in your production setting.
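Evaluating by predictive ability means treating the model as an opaque function and scoring its outputs against what actually happened. The sketch below uses log loss (lower is better) on held-out examples and compares the model against a trivial baseline. The `opaque_model` stand-in, the baseline, and the examples are hypothetical; in production the examples would be real outcomes.

```python
import math
from typing import Callable, Sequence, Tuple


def log_loss(predict: Callable[[dict], float],
             examples: Sequence[Tuple[dict, int]]) -> float:
    """Average log loss of a probability-returning model on labeled examples."""
    epsilon = 1e-12  # keeps log() away from 0 when the model outputs 0 or 1
    total = 0.0
    for features, label in examples:
        p = min(max(predict(features), epsilon), 1 - epsilon)
        total += -(label * math.log(p) + (1 - label) * math.log(1 - p))
    return total / len(examples)


def opaque_model(features: dict) -> float:
    """Stand-in for a Blackbox model: we only see inputs and outputs."""
    return 0.9 if features["bucket"] == "morning" else 0.2


def baseline(features: dict) -> float:
    """Trivial baseline that always predicts 50%."""
    return 0.5


# Hypothetical held-out examples: (features, 1 if the user converted else 0).
held_out = [
    ({"bucket": "morning"}, 1),
    ({"bucket": "night"}, 0),
    ({"bucket": "morning"}, 1),
    ({"bucket": "night"}, 0),
]

model_loss = log_loss(opaque_model, held_out)
baseline_loss = log_loss(baseline, held_out)
print(model_loss, baseline_loss)
```

If the model does not clearly beat a simple baseline on data it has never seen, its complexity is not paying for itself.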

Interpretable models and their decisions, on the other hand, are relatively easy to analyze to decide whether they seem correct for the business problem at hand, and they can then be explained to decision-makers. They may rely on non-interpretable attributes, but can still be understood by their original attributes.
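To illustrate what "easily analyzed" means, here is a sketch of a logistic regression whose prediction can be broken down feature by feature. The weights, bias, and feature names are invented for the example and are not taken from a trained model.

```python
import math

# Hypothetical learned weights; positive values push the prediction up.
WEIGHTS = {"is_morning_commute": 1.2, "is_moving": 0.8, "is_weekend": -0.5}
BIAS = -2.0


def explain(features: dict):
    """Return the predicted probability and each feature's contribution to it."""
    contributions = {
        name: weight * features.get(name, 0) for name, weight in WEIGHTS.items()
    }
    logit = BIAS + sum(contributions.values())
    probability = 1 / (1 + math.exp(-logit))
    return probability, contributions


probability, contributions = explain({"is_morning_commute": 1, "is_moving": 1})
print(round(probability, 3))
for name, value in contributions.items():
    print(name, value)
```

Each contribution can be read directly and checked against business sense (for example, weekends lowering the score), which is not possible in the same way with a neural network.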

In reality, Blackbox models such as neural networks (which were originally designed by computer scientists together with neuroscientists to mimic the way the human brain works) can produce more accurate predictions than simpler, interpretable models, but that isn’t always the case.

Sometimes the simpler models outperform the complex ones because the data scientist has more control over what happens behind the scenes and can make small patches and tweaks to fine-tune the model to the problem at hand.

When planning to tackle a business problem with machine learning, you’ll first need to establish whether it’s important to be able to interpret the decisions the model guides. You’ll also need to consider your production environment’s limitations, as the more advanced Blackbox models require more computational resources for inference.

Predictive Performance Metrics

Using machine learning models, advertisers can predict the eventual lifetime value of each user from their first day of using the app, in many cases with high accuracy. Acting on these predictions can help steer campaign budgets toward the most successful marketing channels early on, allowing the UA team to effectively scan the entire ecosystem for any source that can meet goals and expedite growth.
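As a rough sketch of steering budgets with predicted LTV, the example below splits a budget across channels in proportion to their predicted return per dollar spent (predicted LTV divided by cost per install) and skips channels that are not predicted to pay back their cost. The proportional rule, the channel names, and all numbers are assumptions for illustration; real allocation also has to account for scale limits and prediction uncertainty.

```python
def allocate_budget(total_budget: float, channels: dict) -> dict:
    """Split a budget across channels by predicted return per dollar.

    channels maps a channel name to {"predicted_ltv": ..., "cpi": ...}.
    """
    # Predicted revenue earned for every dollar spent on installs.
    return_per_dollar = {
        name: data["predicted_ltv"] / data["cpi"] for name, data in channels.items()
    }
    # Only fund channels predicted to earn back more than they cost.
    profitable = {n: r for n, r in return_per_dollar.items() if r > 1.0}
    total_return = sum(profitable.values())
    if total_return == 0:
        return {name: 0.0 for name in channels}
    return {
        name: total_budget * profitable.get(name, 0.0) / total_return
        for name in channels
    }


# Hypothetical channels with day-one LTV predictions and install costs.
channels = {
    "channel_a": {"predicted_ltv": 6.0, "cpi": 2.0},
    "channel_b": {"predicted_ltv": 3.0, "cpi": 3.0},
    "channel_c": {"predicted_ltv": 4.0, "cpi": 2.0},
}

allocation = allocate_budget(1000.0, channels)
print(allocation)
```

Because the LTV figures are available on day one, a split like this can be revisited early, well before actual revenue data would allow the same decision.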

Data activation, be it in mobile campaigns or traditional marketing campaigns, uses current and past data to predict future outcomes. This idea relates to predictive KPIs. We’ve discussed the importance of long-term predictive KPIs such as LTV in our Cornerstone KPIs for Mobile UA entry.